Seedance 2.0 is built for stronger structural control and higher creative precision. It can generate videos by combining text, images, video clips, and audio as references. It emphasizes character and object consistency, more realistic physical motion, and complete control from creative replication to forward and backward video extension, making it a strong fit for teams and creators who want a more professional video-generation workflow.

Seedance 2.0 focuses on multimodal references, realistic motion, visual consistency, creative replication, storyboard understanding, and seamless video extension.

When you upload combined assets such as character images, background videos, or audio tracks, Seedance 2.0 can compose them more precisely. It understands which details from the reference materials should be preserved, from lighting mood to complex character motion, and transfers them more accurately into the final video.

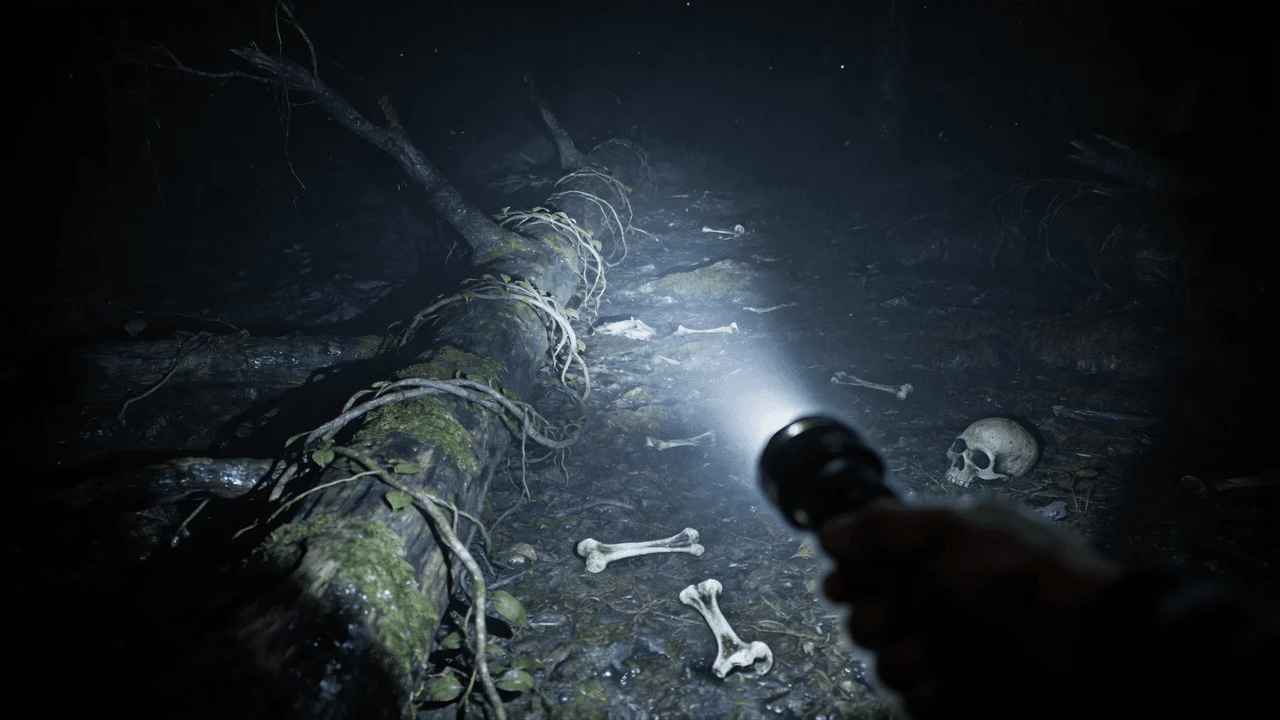

Version 2.0 significantly improves motion and physical realism. From water flow to hair movement, each dynamic detail looks more stable, fluid, and believable. Even without complex references, basic text-to-video outputs can look much more polished and professional.

A common problem in AI video is drift between shots. Seedance 2.0 addresses this by locking in key elements, helping preserve details from product labels to clothing features across the entire clip while reducing flicker and unwanted deformation.

Seedance 2.0 can mimic the pacing and structure of a reference video. It recognizes camera language, transitions, and visual organization, so you can recreate a professional look without relying on complex production terminology.

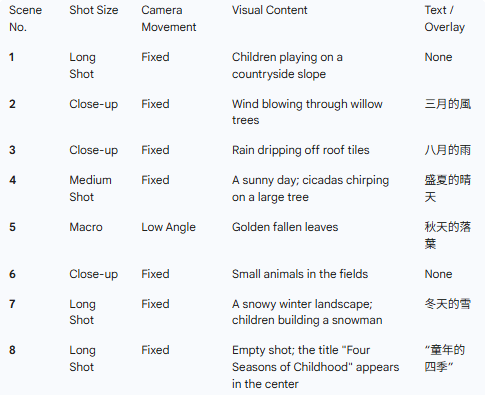

Seedance 2.0 has a deeper understanding of narrative flow and scene logic. It does not just generate isolated clips. It can organize shots based on complex storyboards and detailed prompts, producing results that feel closer to a complete narrative sequence.

With enhanced video extension, Seedance 2.0 can analyze existing footage and generate a natural continuation. Whether you want to add context before a moment or extend a scene after its climax, it does a better job of preserving environmental and character continuity.

From creative input to parameter setup, AI generation, and final export, Seedance 2.0 offers a more complete video-generation workflow.

Enter a detailed prompt or upload a reference image to Seedance 2.0. You can describe characters, scenes, camera angles, and motion so the model can follow director-level instructions and produce more precise scene composition.

Choose the right resolution, aspect ratio, and video duration. Seedance 2.0 offers more flexible output controls so you can match platform requirements and creative goals.

Let Seedance 2.0 generate the result. The model produces high-fidelity video frames with more realistic motion and more coherent multi-shot sequences as part of a more complete end-to-end generation workflow.

Preview the generated result and download an MP4 file that is ready to use. The video can be shared on social platforms or brought into your professional production workflow.
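The resolution and aspect-ratio choice in the steps above comes down to simple arithmetic, which the following minimal sketch illustrates. It is not part of Seedance 2.0: the function name `frame_size`, the "short side" convention (for example, 720 for a 720p output), and the rounding to even pixel counts are all assumptions made for illustration. The even rounding reflects the fact that many common video encoder settings expect even dimensions.

```python
from fractions import Fraction


def frame_size(short_side: int, aspect_ratio: str) -> tuple[int, int]:
    """Return (width, height) in pixels for a short side and a 'W:H' ratio.

    Hypothetical helper for illustration only; it does not describe the
    output sizes Seedance 2.0 actually supports.
    """
    w_part, h_part = (int(part) for part in aspect_ratio.split(":"))
    if short_side <= 0 or w_part <= 0 or h_part <= 0:
        raise ValueError("short_side and both ratio parts must be positive")

    ratio = Fraction(w_part, h_part)

    def to_even(value) -> int:
        # Round to the nearest even integer (assumed encoder-friendly size).
        return int(round(value / 2)) * 2

    if ratio >= 1:
        # Landscape or square: the height is the short side.
        return to_even(short_side * ratio), to_even(short_side)
    # Portrait: the width is the short side.
    return to_even(short_side), to_even(short_side / ratio)


if __name__ == "__main__":
    for ratio in ("16:9", "9:16", "1:1", "4:3"):
        print(ratio, frame_size(720, ratio))
```

Under these assumptions, a 720 short side at 16:9 gives a landscape frame suited to widescreen platforms, while the same short side at 9:16 gives the vertical frame used by short-form feeds.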

These questions and answers are adapted from the Seedance 2.0 page and focus on multimodal references, consistency, commercial usage, video editing, physical realism, and storyboard understanding.

Seedance 2.0 stands out for its multimodal reference capability. While many models mainly accept text or a single image, it can also read video and audio to replicate specific motion, pacing, and style, giving creators a higher degree of control.

The model uses stronger spatiotemporal logic to keep characters and environments stable, helping reduce drift in faces, clothing, and background details from frame to frame. That makes it a better fit for professional storytelling workflows that require strong consistency.

Yes, Seedance 2.0 is suitable for commercial content. It can preserve product details while replicating high-end editing styles such as transitions and camera motion, which makes it well suited for professional marketing content, brand ads, and product demos.

Yes, Seedance 2.0 can be used to edit existing video. You can upload a reference video and combine it with text prompts to change style, characters, or settings while preserving the original motion and camera language as much as possible, making it suitable for video-to-video style creation.

Seedance 2.0 emphasizes stronger physical understanding, allowing the model to better handle real-world dynamics such as gravity, friction, fluid motion, and object interaction. As a result, hair movement, flowing water, and physical contact can carry more weight and look more believable.

Bidirectional extension means you can continue a clip forward or add what happened before it. This makes it easier to build fuller narrative context while preserving environmental, character, and camera continuity as much as possible.
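One practical consequence of bidirectional extension is that adding footage before a clip shifts the position of the original material on the timeline. The sketch below shows that bookkeeping only; it is an illustrative assumption, not a Seedance 2.0 API, and the names `ExtendedTimeline` and `extend_timeline` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExtendedTimeline:
    """Where the original footage sits inside the extended clip (seconds)."""
    original_start: float
    original_end: float
    total_duration: float


def extend_timeline(original: float, before: float = 0.0,
                    after: float = 0.0) -> ExtendedTimeline:
    """Compute timeline offsets after extending a clip in both directions.

    Hypothetical helper: `before` is footage generated ahead of the clip,
    `after` is footage generated as a continuation.
    """
    if original <= 0 or before < 0 or after < 0:
        raise ValueError("original must be positive; extensions must be >= 0")
    start = before                  # original footage now begins here
    end = before + original         # and ends here
    return ExtendedTimeline(start, end, end + after)


if __name__ == "__main__":
    print(extend_timeline(5.0, before=3.0, after=2.0))
```

Any markers tied to the original clip, such as subtitle cues or audio sync points, would need to move by the `before` amount under this model.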

Yes, Seedance 2.0 can combine an image reference with a video reference. You can upload a still image of a character or product, then provide a separate video reference so the model can learn the motion style or camera rhythm and apply that dynamic behavior to the static subject while preserving the original visual details.

Seedance 2.0 has stronger script and storyboard understanding, allowing it to interpret structured shooting scripts or storyboard images while understanding camera angles, lighting mood, and narrative progression. That makes it more suitable for multi-shot sequences aligned with professional filmmaking workflows.

If you want to combine text, image, video, and audio references in a more professional video workflow while gaining more control over consistency, motion quality, and video extension, start with Seedance 2.0.